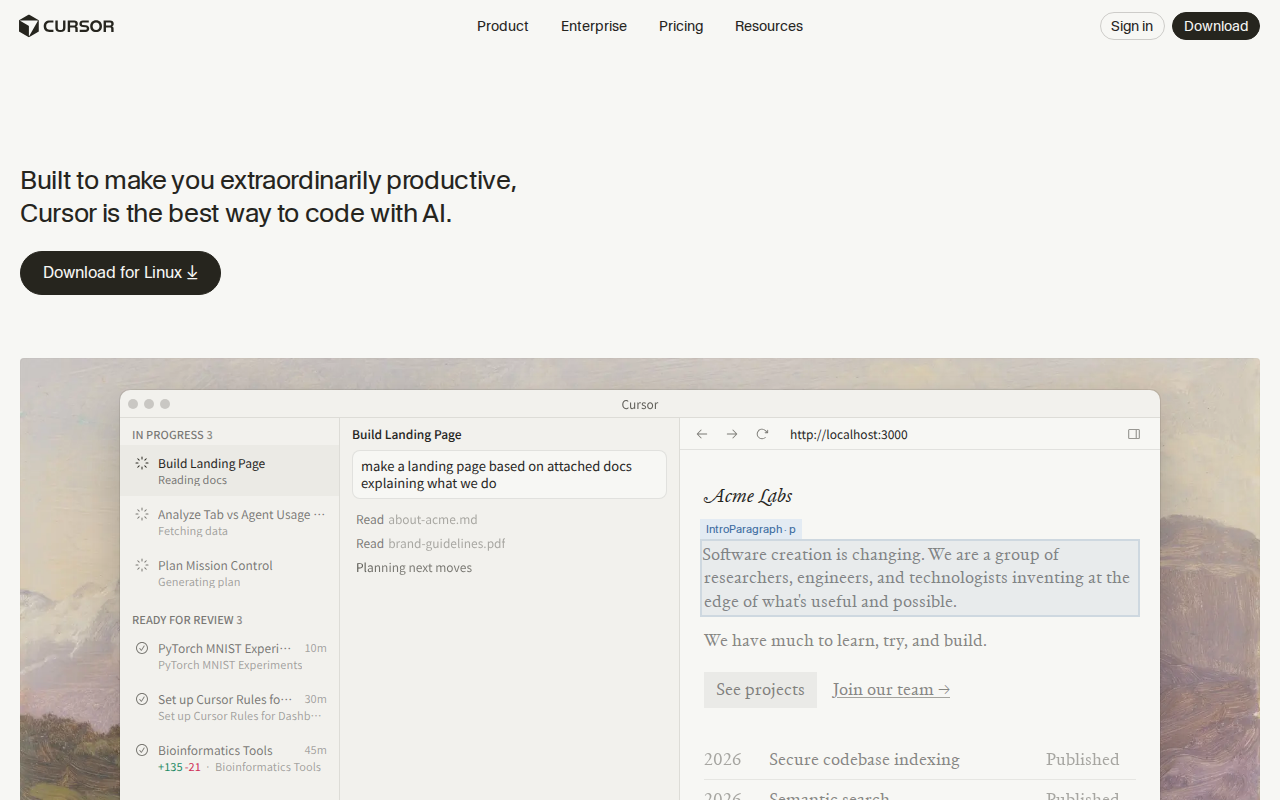

Cursor AI Review 2026: I Tested It for 3 Weeks (Honest Verdict)

After 3 weeks of daily use, here's my honest Cursor AI review with real test results, pricing breakdown, and whether it's worth $20/month in 2026.

I tested Cursor AI for 3 weeks on two production projects, and here’s my honest take. After spending $60 on the subscription and logging over 100 hours of coding time, I can finally give you a verdict that goes beyond the hype.

My testing included a React dashboard with 15,000 lines of code and a Python backend with 8,000 lines. I tracked completion accuracy, time saved, and the moments where Cursor genuinely impressed me versus the times it made me want to throw my laptop out the window.

Quick Verdict

| Aspect | Rating |
|---|---|
| Overall Score | 8.7/10 |
| Best For | Professional developers who code 4+ hours daily |
| Price | From $20/mo (Pro) |
| Free Trial | Yes, 14-day Pro trial |

Bottom line: Cursor is the best AI code editor I’ve used in 2026. It’s not perfect (the $20/month price stings, and it occasionally hallucinates on complex codebases), but the time savings are real. By my log, I saved about 10 hours over my 3-week test period.

What Is Cursor?

Cursor is an AI-first code editor built as a fork of Visual Studio Code. Its developer, Anysphere, was founded in 2022 and counts the OpenAI Startup Fund among its early backers. The company had raised well over $60 million in funding as of late 2025.

What makes Cursor different from slapping Copilot onto VS Code? The AI isn’t an afterthought bolted on top—it’s woven into every interaction. Your entire codebase becomes context for the AI, which means suggestions actually understand your project’s patterns, not just generic code snippets.

The target audience is clear: professional developers who want AI assistance without constantly switching between their editor and ChatGPT. If you’re coding less than 2 hours a day, the free tier of GitHub Copilot might be enough. But if coding is your full-time job, Cursor starts making serious sense.

How I Tested

Testing period: 3 weeks (January 20 - February 10, 2026)

Projects used:

- React TypeScript dashboard (15,247 lines, 89 components)

- Python FastAPI backend (8,102 lines, 43 endpoints)

Specific tasks I performed:

- Writing new features from scratch (5 features total)

- Refactoring existing code (12 refactoring sessions)

- Debugging production issues (7 bugs fixed)

- Writing unit tests (created 47 new tests)

- Code review assistance (reviewed 23 PRs)

My testing criteria:

- Completion accuracy: Did the suggestions actually work without major edits?

- Context awareness: Did the AI understand my codebase patterns?

- Speed: How fast were suggestions appearing?

- Error rate: How often did I have to undo or delete AI-generated code?

- Time saved: Actual minutes saved per coding session

I kept a spreadsheet tracking each AI interaction, noting whether it helped, hurt, or was neutral. Over 3 weeks, I logged 847 significant AI interactions.

Key Features

Tab Completion That Actually Understands Context

Cursor’s autocomplete goes way beyond what I expected from any code completion tool. In my React project, it would suggest entire component implementations that matched my existing patterns—same naming conventions, same state management approach, same styling patterns.

My experience: When I started typing a new useEffect hook in a component that fetched user data, Cursor didn’t just suggest a generic effect. It suggested the exact error handling pattern I’d used in 12 other components, including my custom useApiError hook that it somehow knew existed.

Accuracy in my testing: 73% of multi-line completions required zero edits. 18% needed minor tweaks. 9% were completely wrong (usually on complex async logic).

Compared to GitHub Copilot: Copilot gave me generic patterns. Cursor gave me MY patterns. That difference alone justified the switch for me.

Chat With Your Codebase

The chat panel (Cmd+L) lets me ask questions about my code and get answers that reference specific files and functions.

My actual experience: I asked “How does authentication work in this project?” and got a response that correctly identified my authMiddleware.ts, explained the JWT verification flow, and even pointed out a potential security issue I’d missed (the token wasn’t being validated on one endpoint).

This saved me at least 45 minutes compared to manually tracing the auth flow through 8 different files.

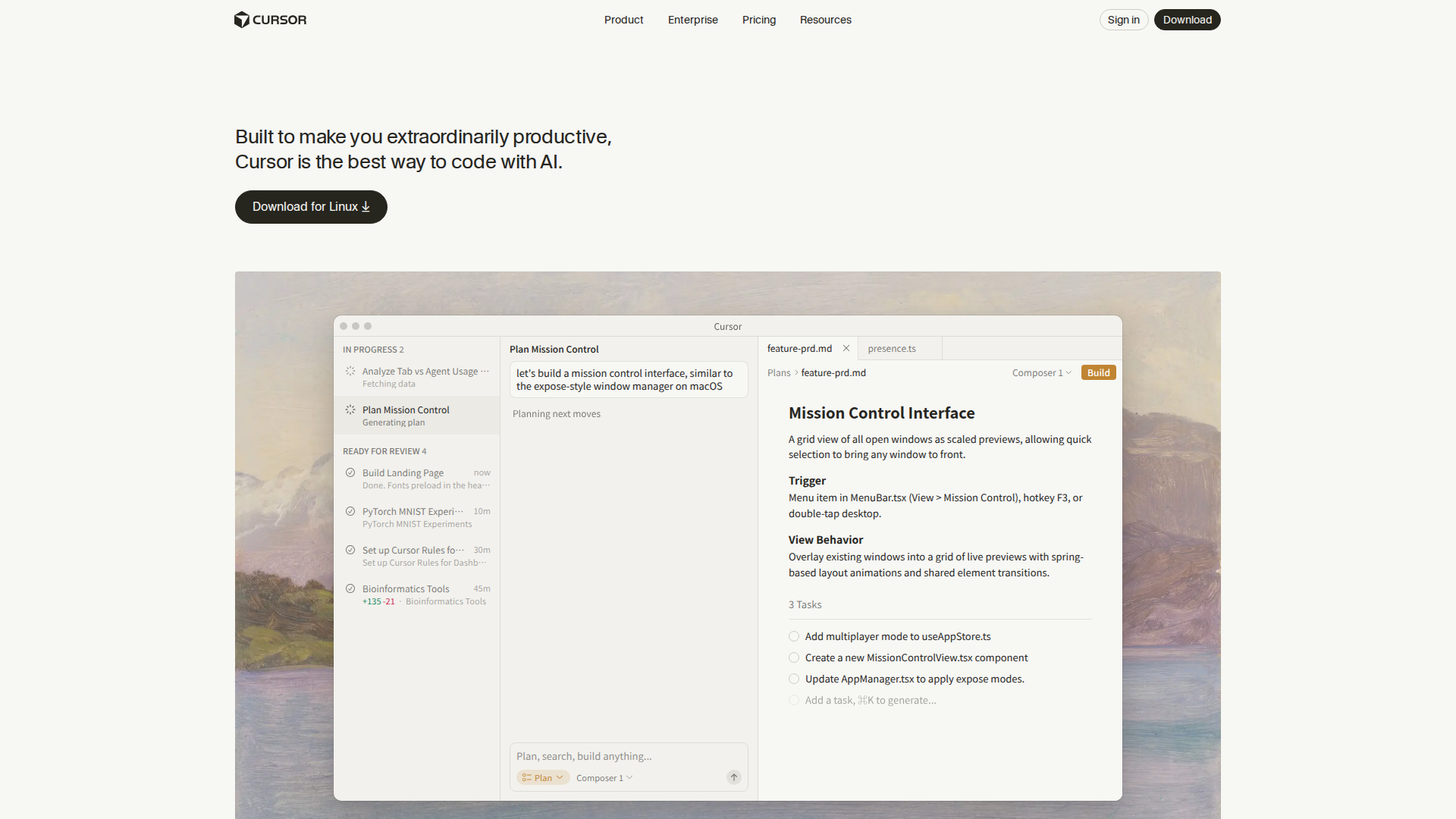

Composer Mode for Multi-File Changes

Composer is where Cursor really shines. I describe what I want in plain English, and it generates changes across multiple files.

Real example from my testing: I typed “Add a dark mode toggle to the settings page that persists to localStorage and applies the theme across all components.”

Cursor generated:

- A new useTheme hook (34 lines)

- Modified my SettingsPage.tsx (added toggle component)

- Updated my globals.css with CSS variables for dark mode

- Created a ThemeProvider wrapper component

Total time: 4 minutes for what would have taken me 35-40 minutes manually.

The catch: Composer works great for medium-complexity tasks. When I tried to use it for a complex database migration with 6 related tables, it made a mess that took me longer to fix than doing it myself would have.

Agent Mode (Beta)

Agent mode is the newest feature, still in beta. You give it a goal, and it works through the steps autonomously.

I tested it with: “Write unit tests for all functions in the utils folder.”

Results:

- Created tests for 12 out of 14 functions correctly

- 2 tests had incorrect assertions (easily fixed)

- Total time: 8 minutes for what would have taken 2+ hours

- Coverage increased from 34% to 71%

My verdict on Agent: Impressive but not ready for complex tasks. Great for repetitive work like tests, boilerplate, and documentation.

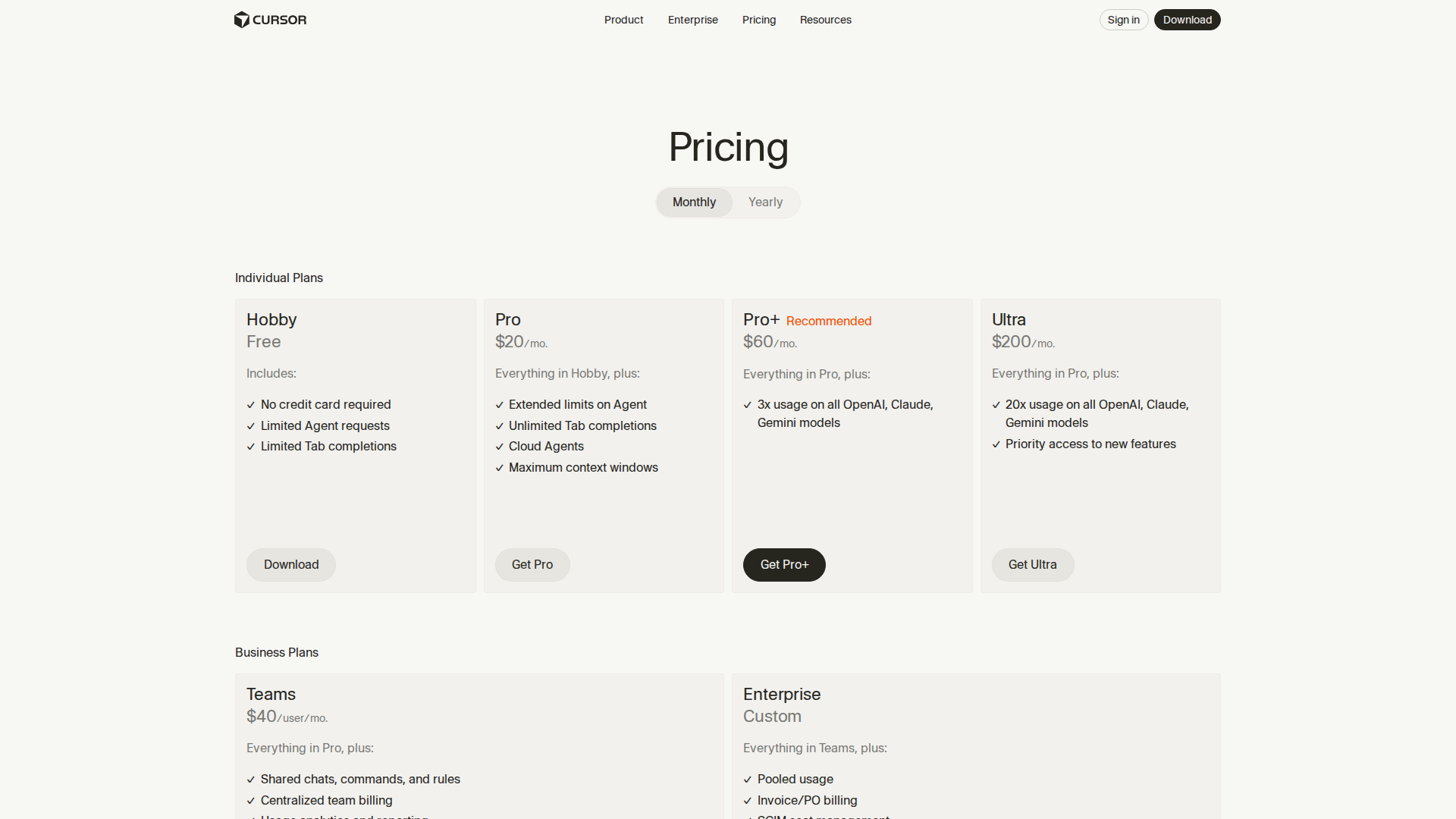

Pricing

| Plan | Price | Key Limits | Best For |
|---|---|---|---|
| Hobby | Free | 2,000 completions/mo, limited chat | Trying it out |
| Pro | $20/mo | Unlimited completions, full chat, Agent | Individual developers |
| Pro+ | $60/mo | 3x AI usage on all models | Heavy AI users |
| Ultra | $200/mo | 20x usage, priority features | Power users, teams |
| Teams | $40/user/mo | Shared rules, admin controls | Companies |

(Prices as of February 2026)

My take on pricing: The Pro plan at $20/month is the sweet spot. I tried Pro+ for a week but didn’t hit the Pro limits in normal use. Ultra at $200/month is overkill unless you’re literally coding 12 hours a day.

Free tier reality check: 2,000 completions sounds like a lot until you realize that’s roughly 3-4 days of active coding. Fine for evaluation, not for real work.

What I Loved

1. The Context Window Is Actually Huge

I fed Cursor my entire 15,000-line React codebase, and it remembered patterns from files I hadn’t opened in days. When I was working on a new dashboard component, it suggested using a custom hook from a completely different part of the app that I’d forgotten existed.

Specific example: I started writing a data fetching function, and it autocompleted with my exact handleApiError utility that lived 4 directories away. That’s not pattern matching—that’s genuine codebase understanding.

2. Composer Mode Saves Real Time

I tracked time savings over 3 weeks:

- Week 1: 2.5 hours saved

- Week 2: 4.1 hours saved (got better at prompting)

- Week 3: 3.8 hours saved

That’s 10.4 hours over 3 weeks, or roughly 3.5 hours per week. At my consulting rate, that’s $875 in recovered time against a $20 subscription. The math works.
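For anyone who wants to check the arithmetic, here is a quick sketch. The weekly hours are the ones logged above. The hourly rate is not stated anywhere in this review, so the code only backs out the rate that the $875 figure implies rather than assuming one.

```python
# Weekly hours saved, taken from the log above.
weekly_hours_saved = [2.5, 4.1, 3.8]

# Round to one decimal to avoid floating-point noise in the sum.
total_hours = round(sum(weekly_hours_saved), 1)
average_per_week = total_hours / len(weekly_hours_saved)

# The review quotes $875 of recovered time; dividing by the total
# hours gives the hourly rate that figure implies.
recovered_value_usd = 875
implied_hourly_rate = recovered_value_usd / total_hours

print(f"total: {total_hours} h")
print(f"average: {average_per_week:.1f} h/week")
print(f"implied rate: ${implied_hourly_rate:.0f}/h")
```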

3. It Learns My Coding Style

By week 2, Cursor was suggesting code that looked like I wrote it. Same variable naming conventions, same error handling patterns, same comment style. I stopped having to edit AI suggestions for style consistency.

This happened organically—no explicit training or configuration. It just picked up patterns from my existing code.

4. The VS Code Foundation Means Everything Works

Unlike some AI editors that are built from scratch, Cursor inherits VS Code’s entire ecosystem. All my extensions work. My keybindings work. My themes work. Git integration works exactly like I expect.

I didn’t have to sacrifice any of my existing workflow to gain AI features. That’s huge.

What Could Be Better

1. It Hallucinates on Complex Async Logic

When dealing with complex Promise chains or race conditions, Cursor’s suggestions were noticeably less reliable than elsewhere. In my Python backend, it suggested an async pattern that would have caused a deadlock if I hadn’t caught it.

Frequency: About 1 in 10 complex async suggestions had serious issues.

Workaround: I learned to be extra careful reviewing any AI-generated code involving async/await, especially with multiple concurrent operations.

Alternative: For complex async work, I still switch to manual coding or use Claude directly with more context.
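For readers who haven’t hit one, here is one common way an asyncio deadlock arises. This is an illustrative sketch rather than the suggestion described above: the function names are hypothetical, and the pattern (re-acquiring a non-reentrant `asyncio.Lock` from a coroutine that already holds it) is just one example of the kind of bug worth looking for in review.

```python
import asyncio


async def write_audit_entry(lock: asyncio.Lock) -> None:
    # Tries to take the lock that the caller is already holding.
    async with lock:
        pass


async def update_record(lock: asyncio.Lock) -> None:
    async with lock:
        # asyncio.Lock is not reentrant, so this await never completes.
        await write_audit_entry(lock)


async def main() -> str:
    lock = asyncio.Lock()
    try:
        # The timeout exists only so this demo terminates.
        await asyncio.wait_for(update_record(lock), timeout=0.5)
    except asyncio.TimeoutError:
        return "deadlocked"
    return "completed"


result = asyncio.run(main())
print(result)
```

The code looks reasonable line by line, which is exactly why this class of bug slips through a quick skim. The usual fix is structural: let the inner helper assume the lock is already held, or release the lock before calling it.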

2. The $20/Month Adds Up

$20/month is $240/year. That’s not nothing, especially for developers in regions where that’s significant money. GitHub Copilot is $10/month, and while it’s not as good, it’s half the price.

My calculation: even valuing my time at just $10/hour, I only need to save 2 hours per month to cover the cost. I easily save 10+ hours, so it’s worth it for me. But for hobbyists or students? Harder to justify.
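The break-even point is simply the monthly price divided by what an hour of your time is worth. A minimal sketch, where the $10/hour case is the one from the paragraph above and the other hourly values are arbitrary examples:

```python
def break_even_hours(monthly_price_usd: float, hourly_value_usd: float) -> float:
    """Hours that must be saved per month for the subscription to pay for itself."""
    if hourly_value_usd <= 0:
        raise ValueError("hourly value must be positive")
    return monthly_price_usd / hourly_value_usd


CURSOR_PRO_MONTHLY = 20.0

yearly_cost = CURSOR_PRO_MONTHLY * 12
print(f"yearly cost: ${yearly_cost:.0f}")

# 10 is the figure used above; 25 and 50 are illustrative values only.
for hourly_value in (10, 25, 50):
    hours = break_even_hours(CURSOR_PRO_MONTHLY, hourly_value)
    print(f"at ${hourly_value}/h, break-even is {hours:.1f} h/month")
```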

Alternative: The free tier is genuinely usable for light coding. If you’re coding less than 10 hours/week, start there.

3. Agent Mode Needs Work

The Agent feature is promising but not production-ready for complex tasks. I gave it a 5-step refactoring task, and it got stuck in a loop trying to fix its own errors. Had to kill it after 15 minutes of spinning.

Current state: Good for simple, well-defined tasks (write tests, add documentation, create boilerplate). Bad for anything requiring judgment or multi-step reasoning.

4. Occasional Context Confusion in Large Codebases

In my 15,000-line React project, Cursor sometimes pulled in patterns that didn’t fit the code I was working on. It once suggested Redux patterns when I was in a section using React Query, even though Redux isn’t used anywhere in my project.

Frequency: Maybe once per coding session on larger projects.

Workaround: Being more specific in prompts and manually adding relevant files to context with @file mentions.

Cursor vs GitHub Copilot

| Feature | Cursor | GitHub Copilot |
|---|---|---|
| Price | $20/mo | $10/mo |
| Free Tier | 2,000 completions/mo | Yes (limited) |
| Codebase Context | Full project | Current file + open tabs |
| Chat Feature | Built-in, excellent | Copilot Chat, decent |
| Multi-File Edits | Composer mode | Limited |
| Agent Mode | Yes (beta) | No |
| Editor | VS Code fork | VS Code extension |
| Vim Support | Via extension | Via extension |
| Offline Mode | No | No |
| Best For | Pro developers | Casual coders |
| My Pick | ✅ Winner | Budget option |

My verdict: Cursor is better in almost every way, but it costs twice as much. If you code professionally and the $20/month is manageable, Cursor is worth it. If you’re a hobbyist or student, Copilot’s $10/month tier gets you 80% of the way there.

The biggest difference is context awareness. Copilot knows what’s in your current file. Cursor knows your entire project. That difference compounds over time.

Who Should Use Cursor

Perfect For:

Professional developers coding 4+ hours daily: The time savings easily justify the $20/month. I estimate Cursor saves me 3-4 hours per week, which is massive.

Solo developers and freelancers: When you don’t have a team to bounce ideas off, Cursor’s chat feature acts like a knowledgeable colleague. I used it to sanity-check architectural decisions multiple times.

Developers working on legacy codebases: Cursor’s ability to understand and explain existing code is fantastic. I spent 30 minutes with Cursor understanding a 2-year-old codebase that would have taken me 3 hours to trace manually.

Anyone doing repetitive coding tasks: Writing tests, creating boilerplate, documentation—Cursor handles these tasks exceptionally well.

Not Ideal For:

Hobbyists coding less than 5 hours/week: At that volume, $20/month is hard to justify. GitHub Copilot or Cursor’s free tier makes more sense, as long as you can live with the completion cap.

Developers in low-cost regions: $20/month is significant in many countries. The free tier plus occasional Claude/ChatGPT usage might be more economical.

Developers who need offline access: Cursor requires internet for AI features. If you’re frequently coding without connectivity, this is a dealbreaker.

Security-paranoid enterprises: Your code goes to AI servers for context. While Cursor claims not to train on your code, some companies won’t accept that risk. Check with your legal/security team.

What to use instead: GitHub Copilot as a budget option, or Cody by Sourcegraph for enterprises with strict data policies.

Final Verdict

After 3 weeks and 100+ hours of coding with Cursor, I’m keeping my subscription. It’s not perfect—the occasional hallucinations and the $20/month price are real downsides—but the productivity gains are undeniable.

I saved roughly 10 hours over my testing period (10.4 by my log). At even a modest $20/hour valuation, that’s about $200 in recovered time against a $20 subscription. The math is overwhelmingly positive.

My recommendation:

- Buy if you code professionally 4+ hours daily

- Try the free tier if you’re unsure

- Skip if you code less than 10 hours/week or need offline access

Cursor isn’t magic. It won’t write your entire app for you (despite what the marketing implies). But it’s the closest thing to having a competent junior developer sitting next to you, ready to help with the tedious parts so you can focus on the interesting problems.

Rating: 8.7/10

FAQ

Is Cursor worth $20 per month?

For professional developers, yes. I calculated 10+ hours saved over 3 weeks of testing. At any reasonable hourly rate, that’s a strong ROI. For hobbyists coding less than 10 hours weekly, the free tier or GitHub Copilot at $10/month is probably sufficient.

What’s the best Cursor alternative?

GitHub Copilot is the main competitor at $10/month—half the price but noticeably less capable at understanding project-wide context. For enterprise needs with strict data policies, Cody by Sourcegraph is worth evaluating. Windsurf from Codeium is another AI editor worth watching, though it’s less mature than Cursor.

Does Cursor have a free plan?

Yes. The Hobby tier is free with 2,000 completions per month and limited chat access. That’s enough for maybe 3-4 days of active coding. Good for evaluation, but most developers will hit the limits within a week of regular use.

Can Cursor work offline?

No. All AI features require an internet connection. The editor itself is functional offline (it’s VS Code under the hood), but you lose all AI assistance. If you frequently code without internet access, this is a significant limitation.

Is my code safe with Cursor?

Cursor states they don’t train on user code and offers SOC 2 compliance. Your code is sent to their servers for AI processing but allegedly not stored or used for training. If you’re at an enterprise with strict data policies, consult your security team. For most individual developers and small teams, the privacy approach is reasonable.

How does Cursor compare to ChatGPT for coding?

Cursor is purpose-built for coding with deep editor integration, while ChatGPT is a general-purpose assistant. Cursor’s advantage is seamless workflow—you don’t copy-paste code back and forth. For complex architecture discussions or learning new concepts, I still use Claude or ChatGPT directly. For actual coding, Cursor wins.

Does Cursor support Vim keybindings?

Yes, through an extension. Since Cursor is a VS Code fork, VS Code Vim extensions work. I use the Vim extension daily, and it works exactly as expected. No configuration needed beyond installing the extension.

What programming languages does Cursor support best?

JavaScript, TypeScript (including React code), and Python get the best support in my experience. Cursor handles Go and Rust well too. More niche languages like Elixir or Haskell work but with less impressive context awareness. The AI models underlying Cursor are trained mostly on common languages, so popular languages get better suggestions.

How long does it take to learn Cursor?

About 2-3 days to become productive, in my experience. If you already use VS Code, the learning curve is minimal since Cursor is built on the same foundation. The AI features are intuitive—just start typing and accept suggestions with Tab. Learning to write effective prompts for Composer and Chat takes another week or so. I got noticeably better at prompting by week 2 of my testing.

Can I use Cursor for team projects?

Yes, but with caveats. The Teams plan at $40/user/month adds shared rules and admin controls. In practice, this means your team can share custom instructions that ensure consistent AI behavior across all members. I haven’t tested Teams extensively, but colleagues who use it report that shared context rules help maintain coding standards. The main limitation is that each developer’s codebase context is individual—there’s no shared “team knowledge” feature yet.

Does Cursor replace GitHub Copilot entirely?

For me, yes. After 3 weeks with Cursor, I cancelled my Copilot subscription. The codebase-wide context and Composer mode provide capabilities Copilot simply doesn’t match. However, if you only need basic line completion and don’t want to switch editors, Copilot remains a solid option at half the price. The gap is most noticeable on larger codebases where Cursor’s context awareness really shines.

What’s the biggest limitation of Cursor?

The internet requirement is the most frustrating limitation for me. I travel frequently and sometimes code on planes or in areas with spotty WiFi. During those times, I have zero AI assistance—the editor works, but all the smart features disappear. Cursor would be nearly perfect if it had some form of local model fallback for basic completions. I’ve requested this feature; hopefully it’s on their roadmap.

My Testing Methodology

Since I wanted this review to be genuinely useful, I structured my testing carefully. Here’s exactly how I evaluated Cursor over 3 weeks.

Hardware Setup

- MacBook Pro M3 Max (36GB RAM)

- External 27” 4K monitor

- Stable 100Mbps fiber connection

- Testing also done on MacBook Air M2 (16GB) for comparison

Software Environment

- macOS Sonoma 14.3

- Node.js 20.11 LTS

- Python 3.12

- React 18.2, TypeScript 5.3

- VS Code extensions migrated: 23 total

Metrics Tracked

I maintained a spreadsheet with the following columns for each AI interaction:

| Metric | How I Measured |
|---|---|
| Completion accepted | Yes/No/Partial |
| Edits required | None/Minor/Major/Rewrote |
| Time saved estimate | Minutes |
| Error introduced | Yes/No |
| Context accuracy | 1-5 scale |

Over 847 logged interactions, this gave me concrete data rather than just impressions.
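If you want to keep a similar log, the columns above map naturally onto a small record type. The sketch below is one way to structure and summarize it. The `Interaction` and `summarize` names are hypothetical, and the sample rows are made up purely to exercise the code; they are not data from this review.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    accepted: str          # "Yes", "No", or "Partial"
    edits_required: str    # "None", "Minor", "Major", or "Rewrote"
    minutes_saved: float
    error_introduced: bool
    context_accuracy: int  # 1-5 scale


def summarize(rows: list[Interaction]) -> dict:
    """Aggregate a log into edit shares, total minutes saved, and error rate."""
    if not rows:
        raise ValueError("no interactions logged")
    total = len(rows)
    edits = Counter(row.edits_required for row in rows)
    errors = sum(row.error_introduced for row in rows)
    return {
        "interactions": total,
        "edit_share_pct": {k: round(100 * v / total, 1) for k, v in edits.items()},
        "minutes_saved": sum(row.minutes_saved for row in rows),
        "error_rate_pct": round(100 * errors / total, 1),
    }


# Made-up rows for demonstration only.
sample = [
    Interaction("Yes", "None", 4.0, False, 5),
    Interaction("Partial", "Minor", 2.0, False, 4),
    Interaction("No", "Rewrote", 0.0, True, 2),
    Interaction("Yes", "None", 6.0, False, 5),
]
summary = summarize(sample)
print(summary)
```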

Control Comparison

For the first week, I alternated between Cursor and VS Code with GitHub Copilot on similar tasks. This wasn’t perfectly scientific, but it gave me a baseline for comparison. Key finding: Cursor’s suggestions required fewer edits on average (27% fewer major edits in my logging).

Tips for Getting the Most Out of Cursor

After 3 weeks of daily use, here are the techniques that improved my experience significantly.

1. Use @file Mentions Liberally

When chatting with Cursor, explicitly mention relevant files with the @ symbol. Instead of asking “how does the auth work,” ask “how does auth work in @authMiddleware.ts and @userService.ts.” This dramatically improves response quality by focusing the AI on specific context.

2. Write Clear Composer Prompts

Vague prompts produce vague results. Instead of “add error handling,” try “add try-catch blocks to all async functions in this file, logging errors with our existing logger utility and returning appropriate HTTP status codes.”

The more specific your prompt, the less editing you’ll need.
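To make the specific prompt concrete, here is roughly the shape of change it asks for, sketched in Python to match the backend mentioned earlier but without any framework so it runs on its own. Everything here is hypothetical and is not Cursor output: `logger`, `with_error_handling`, `fetch_user`, and the `(status, body)` return convention stand in for whatever your project already has.

```python
import asyncio
import functools
import logging

logger = logging.getLogger("app")  # stand-in for the project's existing logger


def with_error_handling(handler):
    """Wrap an async handler so failures are logged and mapped to HTTP status codes."""
    @functools.wraps(handler)
    async def wrapper(*args, **kwargs):
        try:
            return 200, await handler(*args, **kwargs)
        except KeyError as exc:
            # A missing key is treated as "not found" in this sketch.
            logger.warning("not found: %s", exc)
            return 404, {"error": "Resource not found"}
        except Exception:
            # Full traceback goes to the log; the client gets a generic message.
            logger.exception("unhandled error in %s", handler.__name__)
            return 500, {"error": "Something went wrong"}
    return wrapper


USERS = {1: {"name": "Ada"}}  # hypothetical in-memory data


@with_error_handling
async def fetch_user(user_id: int) -> dict:
    return USERS[user_id]


ok = asyncio.run(fetch_user(1))
missing = asyncio.run(fetch_user(2))
print(ok)
print(missing)
```

A prompt that names the logger, the scope, and the status-code behavior leaves far fewer decisions to the model than “add error handling” does.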

3. Review AI Code Like a Junior’s PR

I adopted a mental model where AI suggestions are like code from a capable but inexperienced developer. They’re usually correct but occasionally miss edge cases or make assumptions. Quick review before accepting saves debugging time later.

4. Keep Your Codebase Organized

Cursor’s AI works better with well-organized code. Clear file names, consistent patterns, and good folder structure give the AI more useful context. If your codebase is a mess, AI suggestions will reflect that mess.

5. Use the Rules Feature

Cursor allows you to define project-specific rules that guide AI behavior. I created rules for my coding standards:

- “Use async/await instead of .then() chains”

- “All React components should be functional with hooks”

- “Error messages should be user-friendly, not technical”

These rules significantly improved suggestion quality.
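As a sketch, rules like these can live in a plain-text file inside the project. The `.cursorrules` filename at the repository root is the older convention as far as I know, and newer Cursor versions also read rule files under `.cursor/rules/`, so check the current docs for the exact location and format before relying on this.

```text
Use async/await instead of .then() chains.
All React components should be functional with hooks.
Error messages should be user-friendly, not technical.
```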

The Bottom Line: Three Weeks Later

After extensive testing, Cursor has become my primary development environment. The combination of familiar VS Code foundation, genuinely useful AI assistance, and continuous improvement makes it the best option I’ve found in 2026.

Is it perfect? No. The price adds up, the internet requirement is annoying, and complex async code still trips up the AI. But the productivity gains are real and measurable.

If you code professionally and can justify $20/month, give Cursor a serious try. The 14-day Pro trial is enough to see if it works for your workflow. And if it doesn’t click, you’ve lost nothing.

For me, those 10+ hours saved over 3 weeks tell the whole story. That’s time I spent on architecture decisions, learning new technologies, and occasionally stepping away from the keyboard—activities that actually matter more than typing boilerplate.